Towards a human and robotic society

In 1942, in the story Runaround, the writer Isaac Asimov presented three laws of robotics: 1) a robot may not harm a human being or, through inaction, allow a human being to come to harm; 2) a robot must obey the orders given to it by human beings, except where such orders would conflict with the first law; and 3) a robot must protect its own existence, as long as this does not conflict with the first or second law.

Asimov's science fiction stories depicted people and robots living side by side, and he considered it necessary to program robots according to these laws for that coexistence to work. But that world is no longer so fictional: autonomous vehicles, drones, robots that assist the elderly and the disabled, robots entering industry, and machines capable of learning.

“It is undeniable that a revolution is taking place,” says Elena Lazkano Ortega, a researcher in the Department of Computer Science and Artificial Intelligence at the University of the Basque Country (UPV/EHU). It is less clear, however, how quickly this revolution will arrive: “Sometimes it seems to me that it is already here, that it is upon us, and at other times progress is enormously slow.” Gorka Azkune Galparsoro, an artificial intelligence researcher at Deustotech-Deusto, agrees: “From the point of view of artificial intelligence there has been a great leap, one not seen in many years. It is very difficult to predict how much things will change, but I think that within not too many years it will have a great influence on our lives.”

Given this situation, Asimov's laws will not be enough, and countries such as the US, China and Japan, as well as the European Union, have already begun to address the issue. Azkune believes legislation is already necessary in some cases. “For example, in the US several states are developing legislation on autonomous vehicles. That technology is already very mature.” But he also sees the danger of going too far with legislation: “I am afraid it could limit possible developments. We have to prepare, that's clear, but I don't know how far we can go.” To do things right, Lazkano believes a deep debate is needed: “It is a debate in which we all have to take part: industry, universities and society as a whole. We cannot leave legislation in the hands of governments alone.”

Last February the European Parliament asked the European Commission to propose laws on robotics and artificial intelligence. The request was based on a report on civil law rules on robotics drawn up by the Parliament's Committee on Legal Affairs. In Britain, the British Standards Institution (BSI) also published a standard for the ethical design of robots last year. As an update to Asimov's laws, it highlights three general points: 1) robots should not be designed solely or primarily to kill humans; 2) humans, not robots, are responsible for the actions of robots; and 3) it should be possible to find out who is responsible for each robot and its actions.

In addition to people's safety, then, the focus is on responsibility. Lazkano agrees: “Robots are designed by us, and for now so are their actions. To the extent that this is so, we are responsible. For example, autonomous vehicles currently need a supervisor. The buyer purchases the car under certain conditions and, in case of an accident, if the buyer has respected those conditions, the designer will be responsible; if not, the user will be. We cannot blame the robot for the error. That would be making excuses for ourselves.”

Learning capacity

According to Azkune, however, it may not always be so clear-cut. “Robots are often thought of as being programmed to do one thing. But today robots are able to learn, and they will probably learn even more in the future. And the capacity to learn can give rise to autonomy in decision-making. So, as these robots learn on their own, who is responsible for what they learn? In short, if they learn from us, we will be responsible, but that responsibility can become very diluted across society.”

He compares it with what happens with people: “At first a child knows nothing. If that child later becomes a murderer, who is responsible? Surely society, or others, made some mistakes along the way... Yet in that case it is the person who is blamed. We will have to look at it... From a deterministic or programmatic point of view it may be easier, but artificial intelligence is free, and given how much weight learning is gaining, these questions probably cannot be solved so easily.”

However, the learning capacity of artificial intelligence remains limited. “At the moment they ingest data, they learn what is in it, and they have only a certain capacity to generalize,” explains Lazkano. “So if the data they are given is ethical, their outputs will also be ethical.” Of course, it will not always be easy to ensure that the data is ethical, for example when it comes from the Internet. A case in point is Microsoft's chatbot Tay: it was launched on Twitter and had to be withdrawn within 24 hours after posting racist, sexist and pro-Nazi comments. It had, of course, learned them from its conversations on Twitter.

“This is known as the open-world problem,” says Azkune. “If we open the world to artificial intelligences and let them take in any data, problems could arise. That can be controlled, but for how long, if we want these intelligences to become ever more powerful?” As the ability to learn grows, things could change. “Google's DeepMind is already talking about artificial general intelligence,” says Azkune. An artificial general intelligence would be able to make decisions on its own. “Today, artificial intelligence is fairly controlled through the data and the learning technique you use, but as we move forward and give these intelligences more freedom to decide, it will not be so easy to control what they can learn.”

But Azkune stresses that this is still far off: “In recent years there have been great advances, and if we project them into the future a very different world could emerge, but we are not yet that advanced. We are still at the level of specialization: we have narrow intelligences that are very good at doing specific things, but the generality that human beings have is not close. It is true that this very year some small advances have been made in general intelligence. But we are still doing very basic research. It may come, but we don't know.”

Human hands

Lazkano is clear that whether robots behave ethically is in human hands. “If we don't teach them ethical behavior, the robots we make won't behave ethically.” And we must not forget what they are. “We should definitely think about what use we want to give them. But they are just tools. Tools for doing a thousand things, including talking, but they are tools.”

Azkune also emphasizes this perspective. “We have to keep in mind that, like any technology, it can have downsides, and we have to try to prevent them, but not be afraid. This technology still has a lot of development ahead of it, so let us think about how we can use it to solve real problems. For example, artificial intelligence has already been used to detect skin cancer, and it does so better than people. There could be a thousand other cases. Artificial intelligence could design cancer therapies or new drugs, or predict their effects. We should think about those things: how we can use these new powers to address the problems we have. But, of course, we must always make sure it does not itself become a problem.”

There is debate, for instance, over whether forming emotional bonds with robots could become a problem. Lazkano believes it is inevitable: “Fetishism is part of our nature. We tend to form emotional bonds with objects, and robots are no different.” And often it can be for the good: “Research has shown that the emotional bonds that autistic children, older people and others form with robots are beneficial to them. For example, elderly people who live alone appreciate having a robot at home and, although today's robots are very limited, an emotional bond has been seen to develop with them, which is good for these people. They feel safer at home, they are better off emotionally, and so on. In other cases it can be a problem, but it also has benefits.”

Another worry, and perhaps a more imminent one, is robots eliminating jobs. “Some jobs are going to be lost, but those workers may be able to do other jobs, and robots will create new professions,” says Lazkano. “I don't know how harsh the change will be. That fear has accompanied us through other industrial revolutions too.”

Azkune agrees: “This has happened many times in our history, and I think we will gradually find our place. There will always be a transition phase in which people have a hard time. But let us not assume, as is always said, that those affected will be the least qualified. I do not rule out at all that artificial intelligence will be capable of creating theories, proposing tests and experiments, and so on, and in that case taking a great deal of work away from scientists. We do not know what direction the changes will take, and we all have to adapt to them.”

“And if a society comes in which everything is automated and man doesn’t have to work, it doesn’t seem to me negative,” Azkune added. “We will have to evolve into another kind of society.”

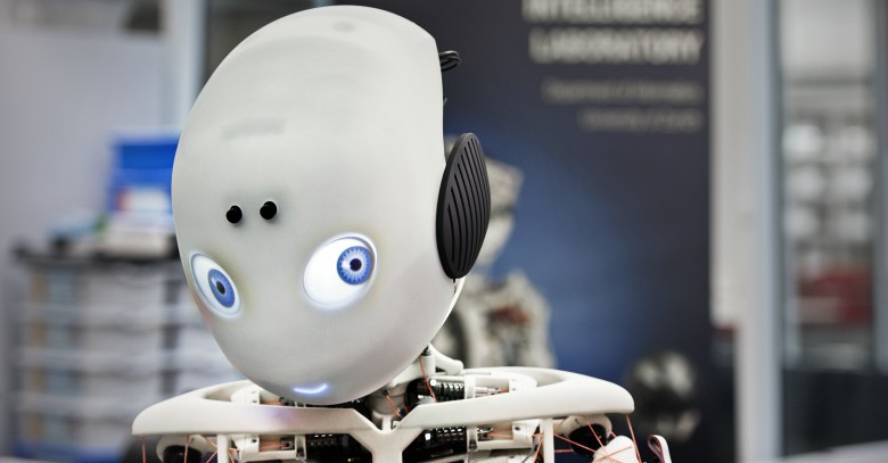

A society of people and robots

This possible society would be one of people and robots. Lazkano believes that within about ten years robots could be in our homes, but there is a long way to go from there to robots being part of society. “Maybe it will come. One of the most important keys would be for them to match our capacity for dialogue. There are already machines capable of holding a coherent conversation for a couple of minutes, but you immediately realize that you are talking to a machine. You'll have a machine at home, not a friend or anything like that.”

It remains to be seen what level robots or artificial intelligences will reach. “I am convinced that if they reach or surpass the level of human beings, they will have to be treated as equals in terms of legal and ethical responsibility. If a robot commits a murder, it must be judged as a person would be, or by similar mechanisms. If we recognize that they have freedom of choice, the ability to learn and so on, we must consider them equals in that sense. The key is the level of intelligence they achieve, and they should be treated accordingly. Today animals are not treated the same as humans, nor is a child treated the same as an adult. Many questions will arise, and much work will have to be done, if it happens.”